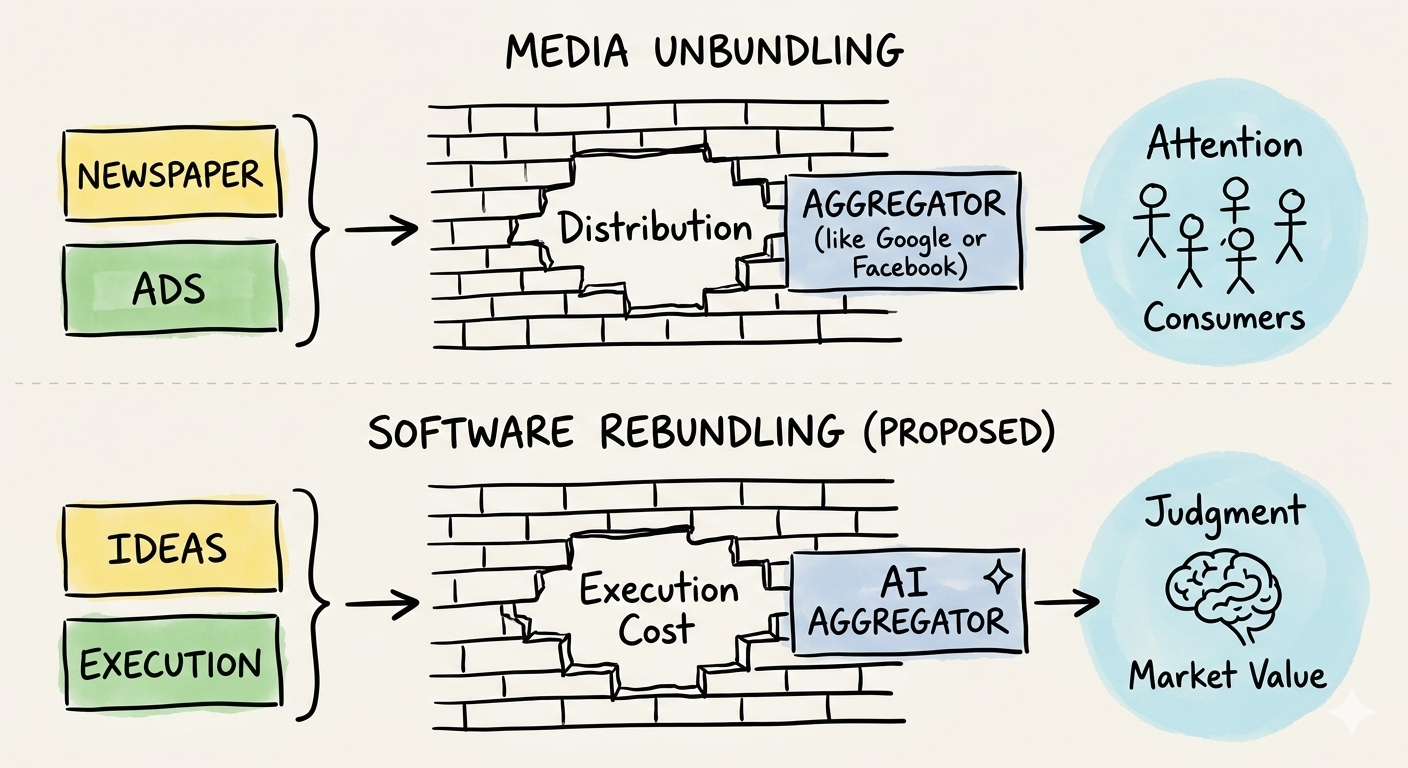

In 2017, Ben Thompson explained how the Internet unbundled media by collapsing distribution costs to zero. Eight years later, AI is doing the same thing to software development, collapsing execution costs and restructuring who builds software, how teams are organized, and which skills actually matter.

The TL;DR for people who scroll fast:

- The Internet unbundled media. AI is now unbundling software teams. Same playbook, different industry.

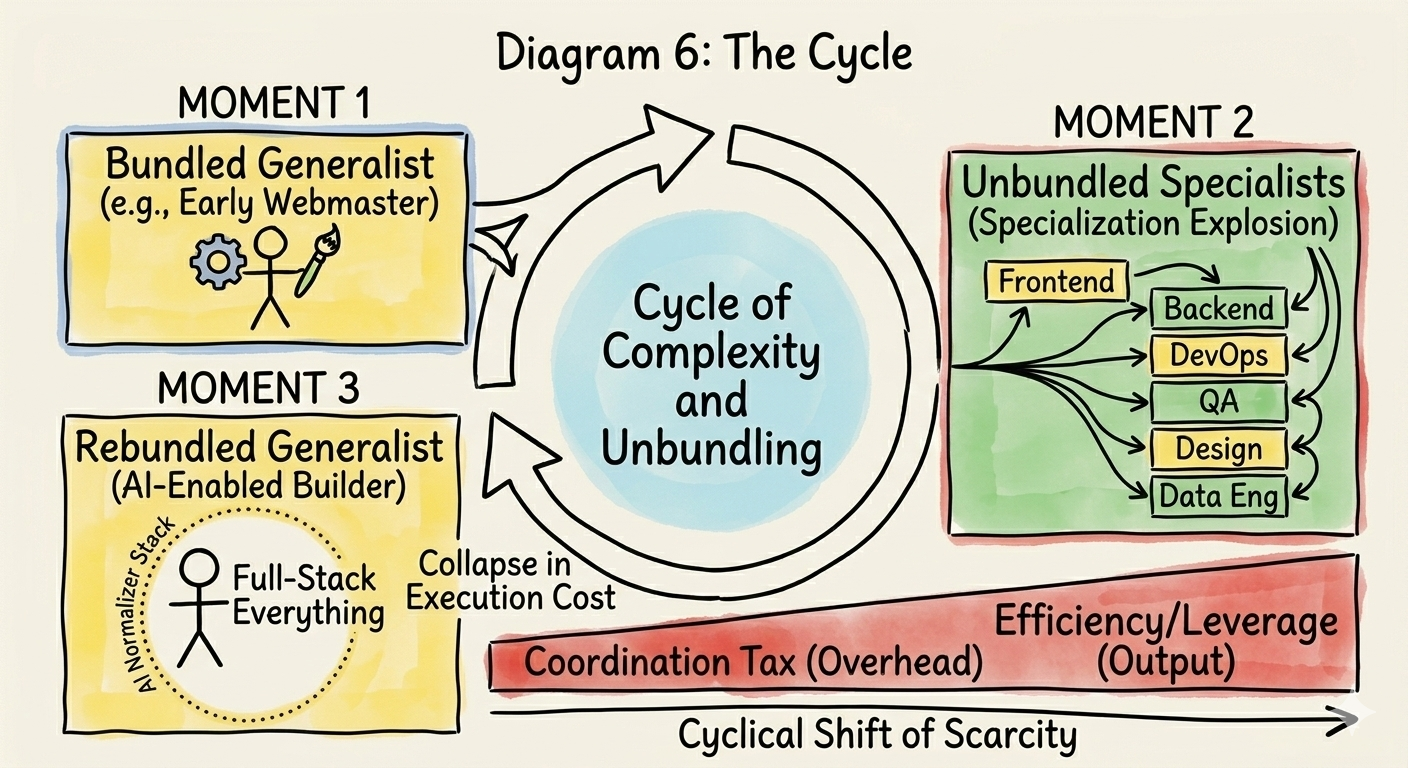

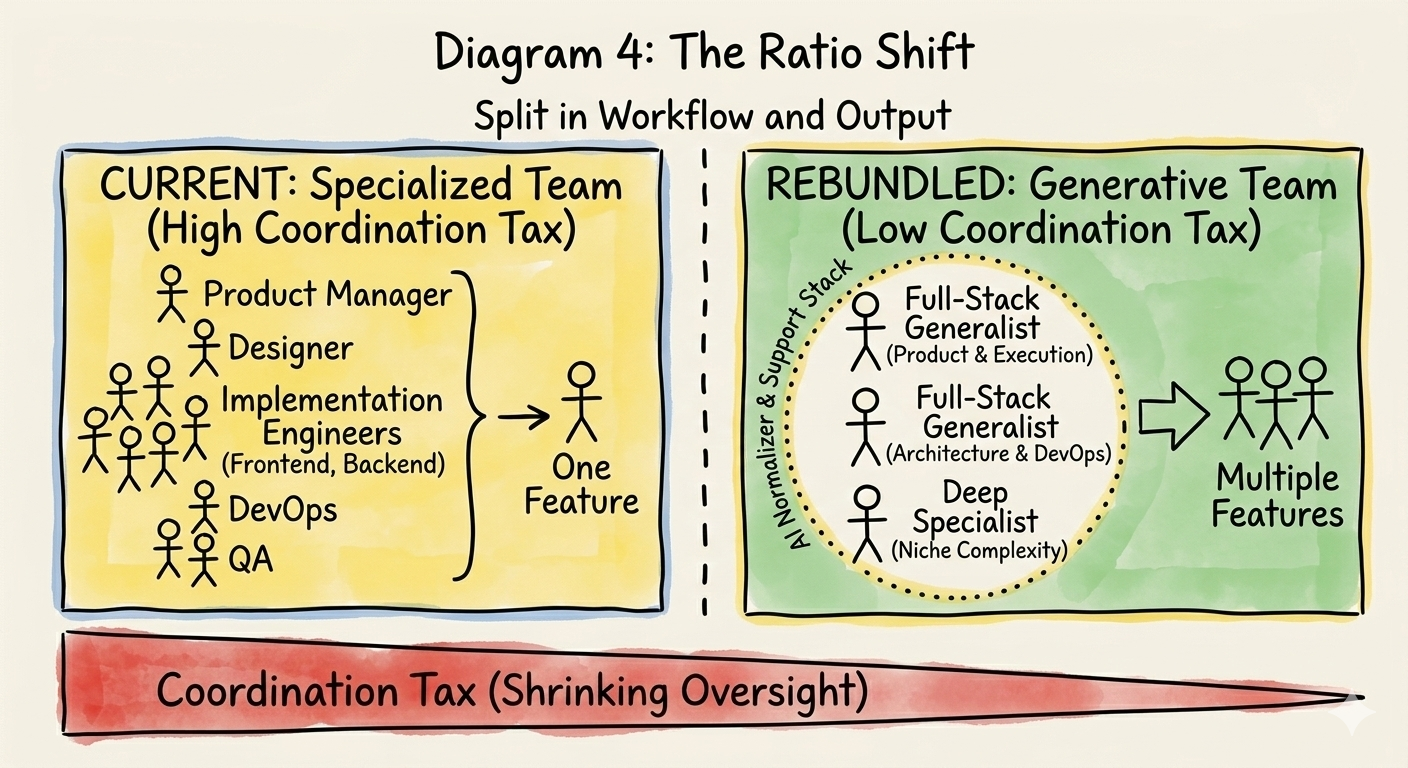

- We spent 20 years splitting "developer" into 15 job titles. The coordination tax got enormous. Now AI is collapsing those boundaries back together.

- One person just built what used to take a funded five-person team. The "full-stack everything" builder is real and multiplying.

- The 10-person cross-functional team compresses to 3. The layer that disappears first? The massive middle of implementation engineers.

- Quality doesn't tank because a new "normalizer stack" (design systems, type-safe APIs, AI code tools) now enforces what human specialists used to enforce manually.

In January 2017, Ben Thompson published "The Great Unbundling" on Stratechery, laying out one of his most enduring frameworks. The argument was clean: the Internet didn't just disrupt media. It collapsed the cost of distribution to zero, unbundling the packages that distribution economics had held together, then rebundling them around new aggregators.

Eight years later, the same structural logic is playing out in software development. And almost nobody is talking about it in these terms.

The Distribution Parallel

Thompson's original insight about media was that bundling was never about consumer preference. It was about distribution economics. A newspaper wasn't a bundle of sections because readers wanted sports next to classifieds. It was a bundle because printing presses and delivery trucks had massive fixed costs that demanded scale. The Internet made distribution free, the bundle fell apart, and new aggregators (Google, Facebook, Netflix) rebundled attention on their own terms.

Software development has its own version of distribution cost. We just call it something different: execution.

For two decades, the dominant bottleneck in building software has been the cost of turning ideas into working products. Not the ideas themselves. The execution. Writing the code, configuring the infrastructure, designing the interfaces, testing the edge cases, deploying to production. This execution bottleneck shaped everything: how we hire, how we organize teams, how we build careers.

And now AI is collapsing that cost toward zero.

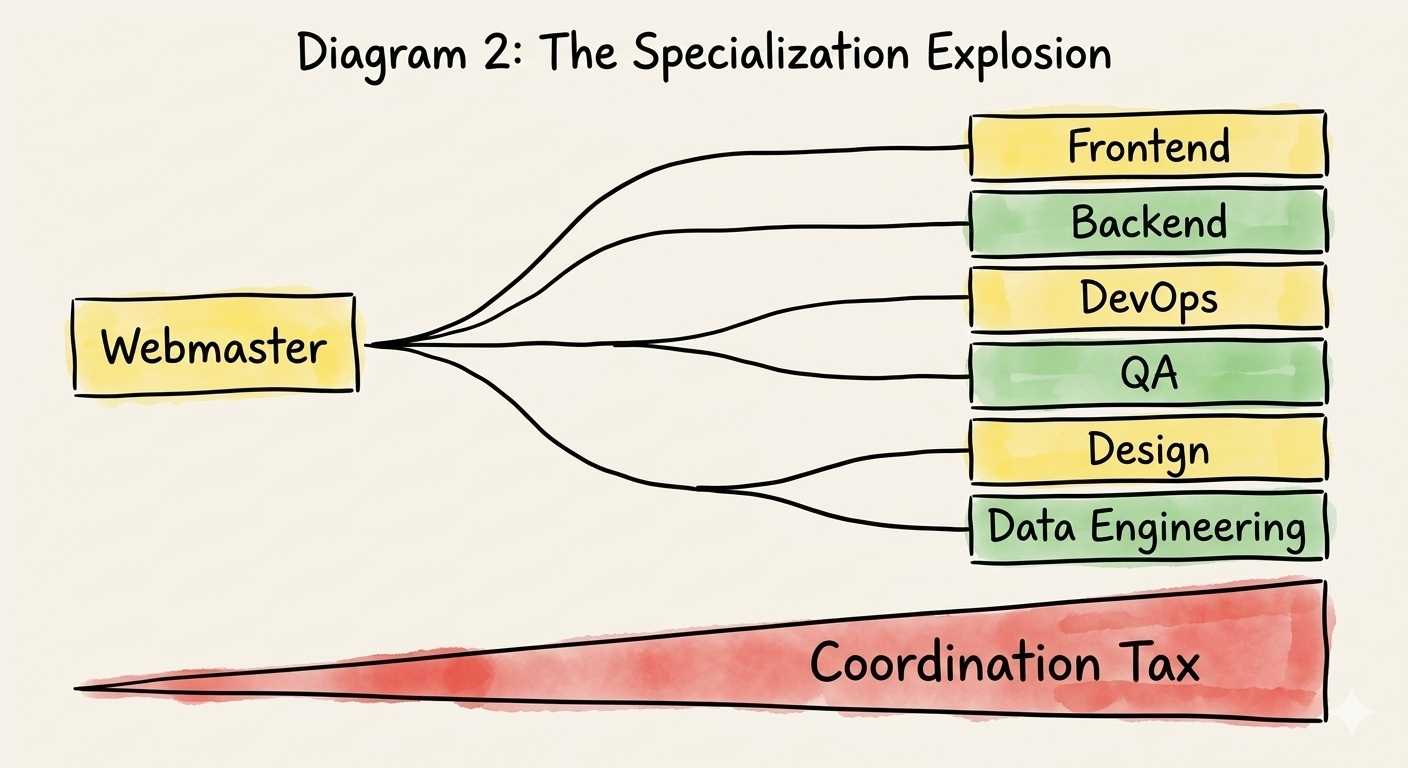

Act 1: The Great Specialization

To understand where we're going, you have to understand how we got here.

In the early web era, software was bundled by default. One person, or a tiny team, did everything. You wrote the HTML, the backend logic, the database queries. You designed the interface in Photoshop and pushed the files to the server via FTP. The "full-stack developer" wasn't a trendy title; it was just what building software meant.

Then complexity grew. And grew. And the rational response was specialization.

Frontend separated from backend. Backend split into services. Infrastructure became its own discipline. Design split into UX research, interaction design, and visual design. Quality assurance became a team. DevOps emerged. Then SRE. Then platform engineering. Then data engineering. Each specialty developed its own tools, its own conferences, its own hiring pipelines, its own career ladders.

This was not a mistake. It was a rational response to genuine complexity. The problem space of modern software (distributed systems, real-time interfaces, security, accessibility, mobile, performance) genuinely demanded deep expertise in each domain.

But specialization comes with a cost that compounds silently: coordination overhead.

Every boundary between specialties creates a handoff. Every handoff requires communication. Communication requires meetings, documentation, tickets, sprints, standups, design reviews, code reviews, QA cycles. The machinery of coordination (Jira boards, Confluence pages, Figma handoffs, Slack threads) became so pervasive that we stopped seeing it as overhead. It was just "how software gets built."

At some point, in many organizations, the coordination cost began to rival, or exceed, the cost of the actual work being coordinated. A feature that one skilled person could reason about end-to-end now required a PM to write the spec, a designer to mock it up, a frontend engineer to build the UI, a backend engineer to write the API, a DevOps engineer to deploy it, and a QA engineer to test it. Six people, four handoffs, three meetings, two sprints.
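To put a rough number on that tax, here is a heuristic the essay doesn't itself use, borrowed from Fred Brooks's *The Mythical Man-Month*: if every member of an n-person group may need to coordinate with every other, the number of pairwise communication channels is

$$
C(n) = \frac{n(n-1)}{2}, \qquad C(6) = \frac{6 \cdot 5}{2} = 15
$$

Six roles already mean fifteen possible channels, and the count grows quadratically with headcount while the work itself grows at best linearly. Real teams don't use every channel, so treat this as an upper bound, but it is one way to see how coordination can come to rival the work being coordinated.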

The bundle held because there was no alternative. Execution was hard enough that you needed specialists at every layer.

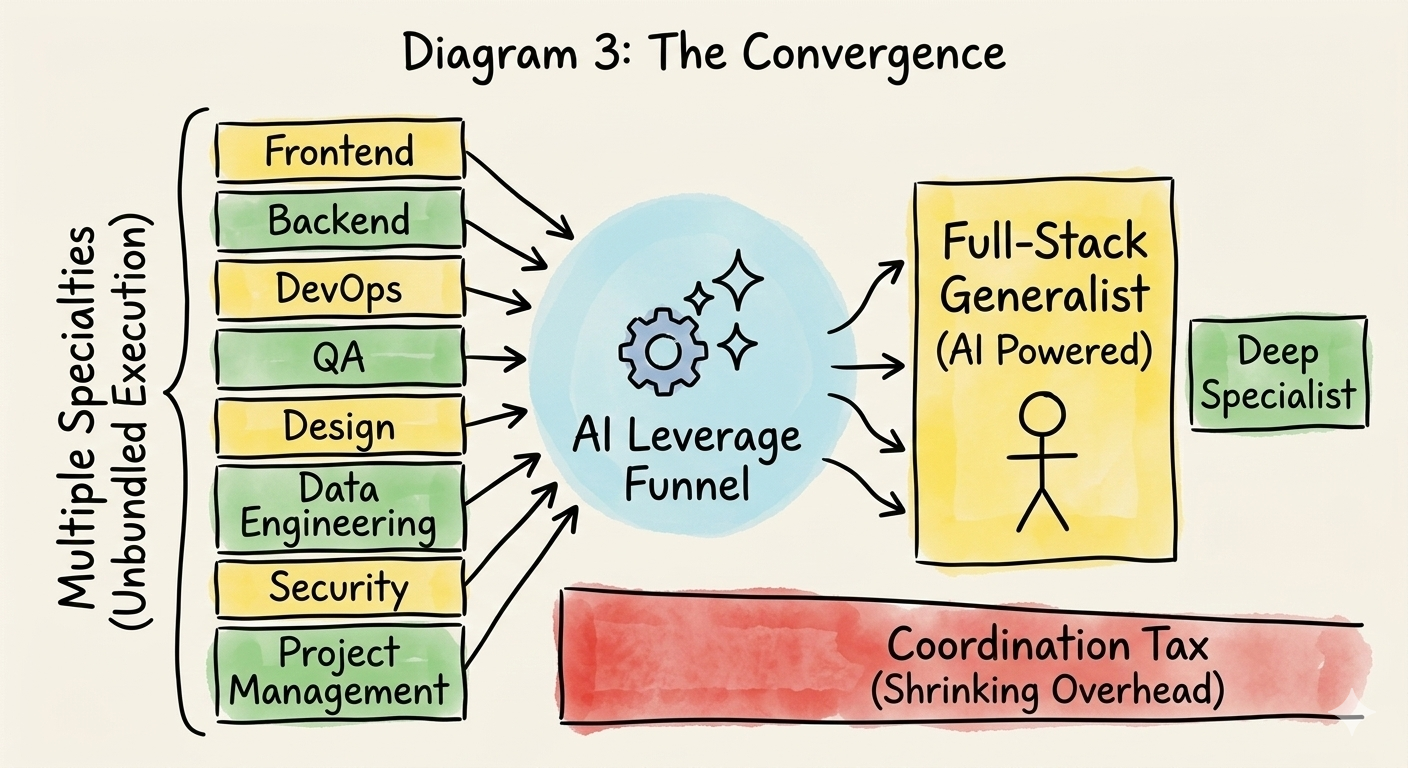

Act 2: The Convergence

AI tools are doing to execution cost what the Internet did to distribution cost: collapsing it.

This isn't the simplistic "AI replaces developers" narrative. Something more structural is happening. The boundaries between specializations (boundaries that existed because execution in each domain was genuinely hard) are dissolving.

A designer who couldn't ship code can now ship code. A backend engineer who produced ugly interfaces can now produce polished UIs. A PM who could only write specs can now prototype working applications. Not because these people suddenly acquired deep expertise, but because AI tools have collapsed the execution barrier enough that a competent generalist can traverse what used to require a cross-functional team.

This is the emergence of what I'll call the "full-stack everything" person. Someone whose core skill is not any single specialization, but the ability to move fluidly across the entire stack of building a business. They think in terms of the whole problem, and AI lets them execute across all the layers that used to be gated by specialist knowledge.

I watched someone do this recently. One person, operating alone, built a platform to ingest live futures order flow. They wired up a real-time WebSocket pipeline to parse the data, built a complex interactive charting frontend to visualize volume profiles and footprint imbalances, integrated secure tiered billing, and at the same time launched targeted Meta ad campaigns to bring in the first cohort of users. Not a team. Not an agency. One person with an AI coding environment open. Five years ago, that output would have required a dedicated data engineer for the pipeline, a frontend developer for the charting, a DevOps engineer to secure the infrastructure, and a performance marketer, plus a venture-backed seed round just to build the MVP. Today, it is a single person with deep domain knowledge and the right leverage.

Thompson observed the same pattern in media. The newspaper's bundle of specialist beat reporters, section editors, layout designers, and distribution managers collapsed into a smaller set of generalist content creators who could write, shoot, edit, publish, and distribute, all by themselves. The survivors at the specialist end weren't the beat reporters covering routine stories; they were the investigative journalists and analysts whose value was irreplaceable judgment, not execution.

The Ratio Shift

Here's where it gets concrete.

A typical product team today looks something like: 1 product manager, 1 designer, 6-8 engineers, plus shared DevOps, QA, and data resources. Call it a 10-person team to ship a feature set.

The rebundled team compresses this dramatically. Maybe 2-3 full-stack-everything generalists who each own entire product surfaces end-to-end, plus 1 deep specialist for the genuinely hard problems: the distributed systems architect, the ML engineer working on novel approaches, the security expert navigating complex compliance requirements.

The layer that compresses most is the massive middle: implementation engineers whose primary job is translating specs into code. Not because they're bad at their jobs, but because that translation (the conversion of "here's what we want" into "here's working software") is exactly what AI tools are best at collapsing.

The math is uncomfortable but worth staring at. If a 3-person rebundled team can produce what a 10-person specialized team produces today, and early evidence from AI-native teams suggests this isn't far off, then the industry doesn't need 70% of its current implementation workforce. Not immediately, not all at once, but directionally. And "directionally" is cold comfort when it's your career in the compression zone.
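Using the section's own figures, where the rebundled team is 2-3 generalists plus 1 specialist (3 to 4 people) replacing a team of roughly 10, the implied headcount reduction is

$$
1 - \frac{3}{10} = 0.70, \qquad 1 - \frac{4}{10} = 0.60
$$

So 70% is the aggressive end of the range, and the same assumptions give 60% at the other end. Both are illustrative arithmetic on the essay's hypothetical team sizes, not measured data, and applying them to the implementation workforce specifically assumes the cuts land mostly on the 6-8 engineers rather than on the PM or design seats.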

Act 3: The New Aggregators

Every rebundling needs its aggregators: the platforms that capture the value freed up by the structural shift.

In media, the aggregators were Google, Facebook, and Netflix. They didn't create content. They captured attention, the scarce resource that content creators competed for once distribution was free. Content got commoditized; curation and distribution became the power position.

In software, a parallel class of aggregators is emerging. They don't write your software. They capture and direct execution, the resource that becomes abundant once AI collapses its cost.

Foundation model providers (OpenAI, Anthropic, Google) are the platform layer. They commoditize raw code generation, the way the Internet commoditized raw content distribution. The more code generation becomes a commodity, the more builders depend on these platforms, and the more the platforms accumulate leverage.

AI-native coding environments (Cursor, GitHub Copilot, Windsurf) are the interface layer. They sit between the builder and the foundation model, shaping how execution is accessed and directed. They're the Google Search of this metaphor: not the content itself, but the way you navigate to it.

The structural logic is identical to Thompson's: commoditize the complement, capture the scarce resource. The foundation models commoditize code execution. The coding environments capture the workflow. And the value that's freed up flows to whoever controls the judgment that directs it all.

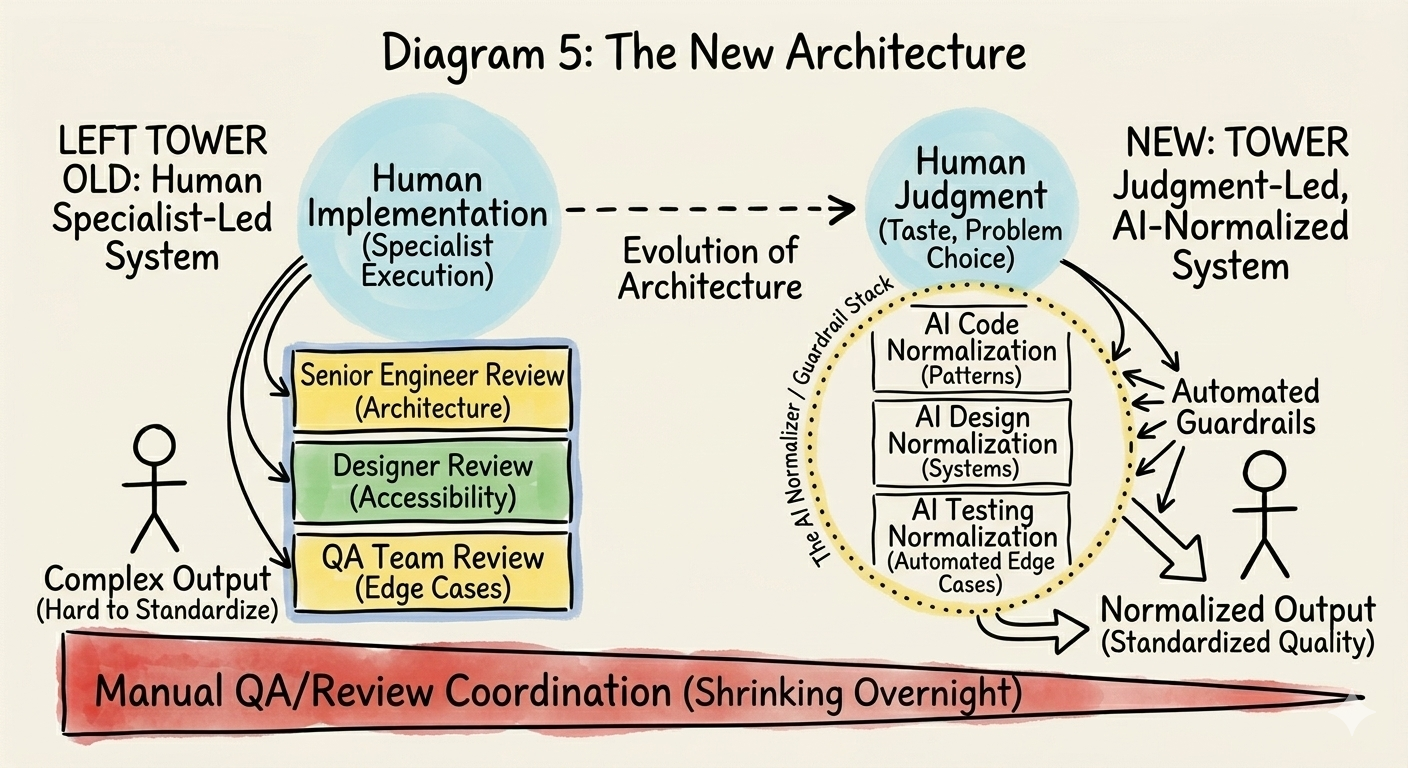

Act 4: The Normalizer Stack

But here's the objection any experienced engineer will raise: if generalists are doing the work of specialists, who's catching the mistakes?

This is the right question, and the answer is architectural, not aspirational.

The rebundled world doesn't work by hoping generalists produce specialist-quality output through sheer taste. It works because a new stack of normalizer layers is encoding the quality standards that human specialists used to enforce manually. These layers are already here:

- Design systems, and AI UI generators built on them (like Vercel's v0), that encode visual and interaction standards so thoroughly that a developer can produce consistent, accessible UI without a designer reviewing every screen.

- Type-safe API layers (like tRPC, or GraphQL with generated client types) that turn entire categories of interface bugs into compile-time errors.

- AI-powered code environments (like Cursor) that flag architectural anti-patterns in real time, while the code is being written.

- Infrastructure-as-code platforms that encode deployment best practices as automated defaults.
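To make the type-safe API bullet concrete, here is a minimal TypeScript sketch of the idea tRPC-style layers build on: server and client share one contract type, so a misspelled field or a missing procedure fails to compile instead of failing in production. This is not tRPC's actual API; `Api`, `handlers`, and `call` are hypothetical names, and the "network" is an in-process function call.

```typescript
// Hypothetical shared contract: in a real app both the server and the
// client would import this one definition.
interface User {
  id: number;
  name: string;
}

interface Api {
  getUser: { input: { id: number }; output: User };
  renameUser: { input: { id: number; name: string }; output: User };
}

// Server side: every procedure in the contract must be implemented,
// and each implementation must match its declared input/output types.
type Handlers = {
  [K in keyof Api]: (input: Api[K]["input"]) => Api[K]["output"];
};

const users = new Map<number, User>([[1, { id: 1, name: "Ada" }]]);

const handlers: Handlers = {
  getUser: ({ id }) => {
    const user = users.get(id);
    if (!user) throw new Error(`no user ${id}`);
    return user;
  },
  renameUser: ({ id, name }) => {
    const user = { id, name };
    users.set(id, user);
    return user;
  },
};

// Client side: the procedure name selects the input and output types.
// (An in-process stand-in for what would normally be a network call.)
function call<K extends keyof Api>(name: K, input: Api[K]["input"]): Api[K]["output"] {
  return handlers[name](input);
}

const renamed = call("renameUser", { id: 1, name: "Ada Lovelace" });
console.log(renamed.name); // "Ada Lovelace"

// Each of these would be rejected by the compiler:
// call("getUser", { userId: 1 });    // `userId` is not in the input type
// call("getUsr", { id: 1 });         // no such procedure
// call("getUser", { id: 1 }).email;  // `email` does not exist on User
```

The design choice that matters is that the contract is a single type both sides depend on. tRPC gets the same effect by inferring the client's types from the server's router, while GraphQL setups typically generate client types from the schema.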

In the old world, human specialists were the quality gates. The senior engineer caught the architectural mistake in code review. The designer flagged the accessibility issue in the mockup review. The QA engineer found the edge case in testing.

In the new world, these normalizer layers serve as those gates. Not perfectly, not yet, but well enough, and improving on a curve that's hard to bet against.

This is the crucial structural insight: the rebundled world isn't a free-for-all where generalists produce sloppy work. It's a world where quality enforcement has moved from human specialists to architectural layers, and the humans are freed up to focus on the thing that's actually scarce: deciding what to build and why.

The Value Migration

The value chain of software is inverting.

For twenty years, the hierarchy was: ideas are cheap, execution is expensive. Everyone has app ideas; few people can build them. This created an economy that valued execution above almost everything else. Hiring optimized for it. Compensation reflected it. Status within engineering culture was built on it.

That hierarchy is flipping. When execution cost collapses, execution becomes abundant. What becomes scarce, and therefore valuable, is everything execution isn't:

- Seeing what needs to exist. The ability to identify problems worth solving before they're obvious. This isn't "product management" in the current sense of writing user stories. It's the deeper skill of understanding a domain so thoroughly that you can see what's missing.

- Taste. The ability to make thousands of micro-decisions about what a product should feel like. Decisions that can't be specified in a prompt because they require holistic judgment about the user experience.

- Architectural vision. Not the implementation of architecture, but the judgment about which architecture to choose. Understanding the tradeoffs deeply enough to make decisions that compound positively over years.

- Directing AI tools effectively. This is the new meta-skill. The ability to decompose problems, prompt effectively, evaluate outputs, and orchestrate AI tools across a complex workflow. It's closer to film directing than coding.
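As a toy illustration of the decompose, prompt, evaluate, orchestrate loop, here is a TypeScript sketch in which the person supplies the decomposition and the acceptance check, and the model is reduced to a function from prompt to text. `generate` is a deterministic stub standing in for a real model call, and every name here (`orchestrate`, `Generator`, `Evaluator`, `stubModel`) is hypothetical; no real model API is being shown.

```typescript
// Hypothetical stand-in for a model call: prompt in, candidate text out.
type Generator = (prompt: string) => string;
// The person's judgment, encoded as an acceptance check.
type Evaluator = (candidate: string) => boolean;

interface StepResult {
  subtask: string;
  output: string;
  attempts: number;
  accepted: boolean;
}

// Work through subtasks in order; retry each one with feedback
// until it passes the evaluator or runs out of attempts.
function orchestrate(
  subtasks: string[],
  generate: Generator,
  evaluate: Evaluator,
  maxAttempts = 3,
): StepResult[] {
  const results: StepResult[] = [];
  for (const subtask of subtasks) {
    let output = "";
    let accepted = false;
    let attempts = 0;
    while (!accepted && attempts < maxAttempts) {
      attempts++;
      // After a rejection, feed the failed attempt back into the prompt.
      const prompt =
        attempts === 1 ? subtask : `${subtask}\nPrevious attempt rejected: ${output}`;
      output = generate(prompt);
      accepted = evaluate(output);
    }
    results.push({ subtask, output, attempts, accepted });
  }
  return results;
}

// Deterministic stub: returns a "draft" first, a usable answer only after feedback.
const stubModel: Generator = (prompt) =>
  prompt.includes("rejected") ? `done: ${prompt.split("\n")[0]}` : "draft";

const plan = ["parse order-flow messages", "render volume profile chart"];
const results = orchestrate(plan, stubModel, (c) => c.startsWith("done:"));

for (const r of results) {
  console.log(`${r.subtask}: ${r.accepted ? "accepted" : "rejected"} after ${r.attempts} attempt(s)`);
}
```

The point of the sketch is where the judgment sits: the decomposition (`plan`) and the acceptance test are written by the person, while the generator is interchangeable.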

Here's the uncomfortable implication: most of the current hiring infrastructure is optimized for exactly the layer that's compressing. LeetCode interviews test algorithmic implementation. Take-home projects test CRUD execution. Whiteboard exercises test the ability to translate a spec into code under pressure. These are all assessments of execution skill, the very thing AI is commoditizing.

The companies that adapt fastest will find entirely different hiring signals: taste portfolios, product instinct exercises, architectural judgment interviews, "build something from zero" assessments where what gets evaluated isn't the code but the choices.

The Counterargument, and Why It's Half Right

The strongest counterargument to this thesis is: "Specialization exists because problems are genuinely complex, not just because execution is hard. You can't collapse a distributed systems expert into a generalist with AI tools."

This is half right, and the half that's right matters.

AI collapses execution complexity, the difficulty of turning a known approach into working code. It does not collapse problem complexity, the difficulty of figuring out the right approach in the first place.

A distributed systems architect's value was never primarily in writing the code for a consensus algorithm. It was in knowing when you need a consensus algorithm, understanding the tradeoffs between consistency models, and making judgment calls about system design that play out over years. That judgment doesn't compress. If anything, it becomes more valuable as the execution layer commoditizes, because the consequences of bad architectural judgment multiply when you can execute on bad decisions faster.

The specialists who thrive in the rebundled world are the ones whose value was always in judgment, not implementation. The ones who are in trouble are the ones who wrapped judgment-level compensation around what was fundamentally execution-level work.

What Rebundles Around What?

Thompson's framework always has a clean center: media rebundled around attention, captured by aggregators. What does software rebundle around?

The answer is judgment. Amplified by AI-native tools, directed through the new aggregator platforms, and normalized by architectural layers that enforce quality without requiring human specialists at every gate.

The rebundled unit isn't a platform or an aggregator. It's a new type of team: small, generalist-heavy, judgment-rich, operating on AI-powered architectural foundations that encode the quality standards specialists used to enforce manually. These teams will be staggeringly productive by current standards, and the companies built around them will have structural advantages that specialized-team-based companies can't match.

Which, if you think about it, is how software started. A few people with good taste, building something they believed in. We just went through a twenty-year detour of specialization to handle complexity that machines can now handle for us.

The bundle is reforming, and the question is no longer whether it happens; the economics are too clear for that. The question is what happens to the millions of people whose careers were built in the middle of a bundle that no longer needs them. The specialization era created an enormous professional class of implementation engineers, coordination specialists, and process managers. The rebundling doesn't have room for most of them, at least not in their current roles.

The media unbundling Thompson described had the same aftermath. Newspaper newsroom employment in America fell by more than half. The survivors weren't the ones who wrote the most competent articles. They were the ones whose judgment was irreplaceable.

Software's version of that reckoning is just beginning.